01 The question we're answering

When a commercial property sells, the county usually reassesses it. The new taxable value — and therefore the new tax bill — depends on local rules, prior assessments, and the price paid. The Tax Estimator answers a single question:

“If this property sold today at price V, what would the county tax it next year?”

The estimator returns three things: a point estimate of the total tax bill, a likely range around it, and a step-by-step breakdown of how the answer was assembled. It is built from observed behavior on recent comparable sales, not from county valuation formulas.

02 End-to-end flow

The total tax bill is the sum of two pieces: an ad valorem tax (driven by taxable value × millage rate) and non-ad-valorem assessments (fixed jurisdictional fees such as fire, drainage, and lighting).

The only quantity the estimator predicts is the tax assessment ratio — the fraction of the sale price that the county will treat as taxable. Millage rates and non-ad-valorem assessments are looked up directly from the county's parcel and jurisdictional records. Most of this page is about how we predict the ratio.

03 Why we predict a ratio, not a dollar amount

A $20M industrial property and a $2M industrial property pay dramatically different tax bills. But the ratio of taxable value to sale price across qualified, arm's-length sales tends to cluster within a tight band for properties in the same county and asset class. That makes the ratio a far more stable quantity to model than the dollar bill itself.

Once we have a predicted ratio, recovering dollars is mechanical:

Taxable Value = Predicted Ratio × Sale Price

Ad Valorem Tax = Taxable Value × Millage Rate

Total Tax Bill = Ad Valorem Tax + Non-Ad-Valorem
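The three formulas chain directly. Below is a minimal Python sketch, assuming the millage rate is supplied as a decimal fraction of taxable value (a mill is one-thousandth of a dollar, so 20 mills becomes 0.020). The function name recover_tax_bill is hypothetical, not the engine's API.

```python
def recover_tax_bill(predicted_ratio: float, sale_price: float,
                     millage_rate: float, non_ad_valorem: float) -> dict:
    """Turn a predicted tax assessment ratio into dollars (illustrative sketch).

    Assumes millage_rate is a decimal (mills / 1,000), not a raw mill count.
    """
    taxable_value = predicted_ratio * sale_price    # Taxable Value = Ratio x Sale Price
    ad_valorem_tax = taxable_value * millage_rate   # Ad Valorem Tax = Taxable Value x Millage
    total = ad_valorem_tax + non_ad_valorem         # Total = Ad Valorem + Non-Ad-Valorem
    return {
        "taxable_value": taxable_value,
        "ad_valorem_tax": ad_valorem_tax,
        "total_tax_bill": total,
    }
```

For example, a predicted ratio of 0.85 on a $10,000,000 sale at 20 mills (0.020), with $15,000 of non-ad-valorem assessments, gives a taxable value of $8,500,000, an ad valorem tax of $170,000, and a total bill of $185,000.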

04 Building the comparable-sales pool

For each estimate, the engine assembles a pool of qualified arm's-length sales from the last three years that share the subject's county and asset class (office, retail, industrial, multifamily, etc.). Each comp contributes two numbers:

- The comp's tax assessment ratio: what we ultimately want to predict for the subject. Computed as taxable_after_sale ÷ sale_price.

- The comp's pre-sale ratio: a similarity coordinate, computed as prior-year market value ÷ sale price. Think of this as "how stale was the previous assessment relative to where the property actually trades?"

The pool is filtered to remove anomalies: comps whose ratio falls outside [0.30, 1.50] are dropped as data errors. The estimator currently covers Palm Beach, Broward, and Miami-Dade. A typical industrial subject in a well-covered county draws on hundreds of comps; thinner classes (hospitality, special-purpose) and counties that are still being onboarded typically have 20–50.
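A minimal sketch of the pool-building step follows. It assumes the qualified/arm's-length screen has already been applied upstream (only qualified sales are passed in), approximates "the last three years" as 3 × 365 days, and applies the [0.30, 1.50] filter to the tax assessment ratio. The Sale and Comp record types and the build_comp_pool name are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Tuple


@dataclass
class Sale:
    """Hypothetical record for one qualified, arm's-length sale."""
    county: str
    asset_class: str
    sale_date: date
    sale_price: float
    taxable_after_sale: float
    prior_market_value: Optional[float]  # prior-year market value; None if not on file


@dataclass
class Comp:
    assessment_ratio: float          # target: taxable_after_sale / sale_price
    pre_sale_ratio: Optional[float]  # similarity coordinate: prior market value / sale_price


def build_comp_pool(sales: List[Sale], county: str, asset_class: str, as_of: date,
                    lookback_days: int = 3 * 365,
                    ratio_bounds: Tuple[float, float] = (0.30, 1.50)) -> List[Comp]:
    cutoff = as_of - timedelta(days=lookback_days)
    lo, hi = ratio_bounds
    pool = []
    for s in sales:
        # Keep only the subject's county and asset class, sold inside the lookback window.
        if s.county != county or s.asset_class != asset_class:
            continue
        if not (cutoff <= s.sale_date <= as_of) or s.sale_price <= 0:
            continue
        ratio = s.taxable_after_sale / s.sale_price
        if not (lo <= ratio <= hi):
            continue  # outside the plausible band: treated as a data error
        pre = (s.prior_market_value / s.sale_price
               if s.prior_market_value is not None else None)
        pool.append(Comp(ratio, pre))
    return pool
```

Comps without a prior-year value stay in the pool with pre_sale_ratio set to None; they can still feed a flat median even though they cannot be placed on the similarity coordinate.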

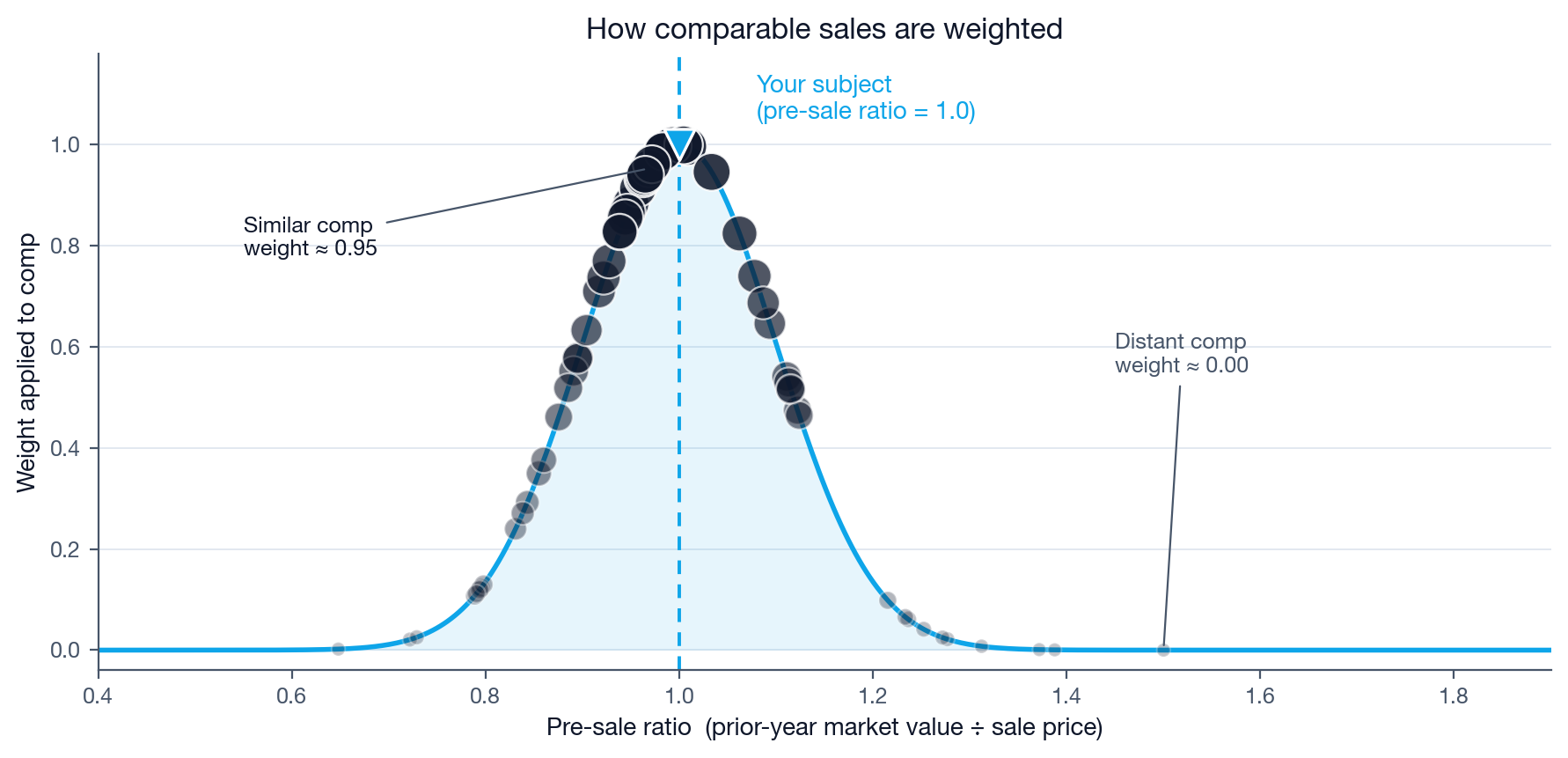

05 The kernel method — weighting by similarity

Not every comp deserves an equal vote. A property whose prior assessment was 95% of its eventual sale price tells us something very different about post-sale reassessment than one whose prior assessment was only 40% of its sale price. Counties tend to reassess more aggressively after a sale when the prior assessment looks stale — so the pre-sale ratio is the single strongest signal of post-sale behavior.

The kernel method gives every comp a weight between 0 and 1 based on how close its pre-sale ratio is to the subject's, following a bell-shaped curve: comps right at the subject's pre-sale ratio receive full weight, and comps far from it fade smoothly toward zero.
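The text does not name the kernel's exact shape. A Gaussian kernel is one common bell-shaped choice that matches the description (weight 1 at zero distance, smooth decay toward 0), so the sketch below assumes it. The function name kernel_weight is hypothetical.

```python
import math


def kernel_weight(comp_pre_sale_ratio: float, subject_pre_sale_ratio: float,
                  bandwidth: float) -> float:
    """Gaussian kernel weight in [0, 1]; exactly 1.0 when the pre-sale ratios match."""
    if bandwidth <= 0:
        raise ValueError("bandwidth must be positive")
    z = (comp_pre_sale_ratio - subject_pre_sale_ratio) / bandwidth  # distance in bandwidths
    return math.exp(-0.5 * z * z)
```

With a subject pre-sale ratio of 0.60 and a bandwidth of 0.10, a comp at 0.60 gets weight 1.0, a comp at 0.70 gets about 0.61, and a comp at 0.95 gets roughly 0.002. A comp whose prior assessment was much less stale than the subject's contributes almost nothing.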

The width of the bell — the "bandwidth" — is tuned offline per county and asset class so that the resulting predictions track historical truth as tightly as possible. The tuning step also measures how much the kernel actually improves on a simple median in each (county, class) — that measurement drives the warnings discussed in §07 and §09 below, so the estimator only flags flat-mode predictions as a problem in the counties and classes where the kernel demonstrably helps.
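The source does not say how the offline tuning is scored. One plausible sketch makes four assumptions: leave-one-out prediction on a single (county, class) comp pool, median absolute error as the score (the metric §06 reports), a Gaussian kernel, and a small grid of candidate bandwidths. It returns the best bandwidth and how much it beats the flat median, the kind of per-segment gap the warnings depend on. All names are hypothetical.

```python
import math
import statistics
from typing import List, Optional, Sequence, Tuple

Comp = Tuple[float, float]  # (pre_sale_ratio, assessment_ratio)


def _weighted_median(values: Sequence[float], weights: Sequence[float]) -> float:
    total = sum(weights)
    if total == 0:  # every weight underflowed: fall back to the plain median
        return statistics.median(values)
    pairs = sorted(zip(values, weights))
    cumulative = 0.0
    for value, weight in pairs:
        cumulative += weight
        if cumulative >= total / 2:
            return value
    return pairs[-1][0]


def _predict(subject_pre: float, comps: List[Comp], bandwidth: Optional[float]) -> float:
    ratios = [r for _, r in comps]
    if bandwidth is None:  # flat mode: unweighted median
        return statistics.median(ratios)
    weights = [math.exp(-0.5 * ((p - subject_pre) / bandwidth) ** 2) for p, _ in comps]
    return _weighted_median(ratios, weights)


def loo_median_abs_error(comps: List[Comp], bandwidth: Optional[float]) -> float:
    """Hold out each comp in turn, predict it from the rest, report the median |error|."""
    errors = []
    for i, (pre, actual) in enumerate(comps):
        rest = comps[:i] + comps[i + 1:]
        errors.append(abs(_predict(pre, rest, bandwidth) - actual))
    return statistics.median(errors)


def tune_bandwidth(comps: List[Comp], candidates: Sequence[float]) -> Tuple[float, float]:
    """Return (best bandwidth, flat error minus kernel error); a positive gap means the kernel helps."""
    best = min(candidates, key=lambda h: loo_median_abs_error(comps, h))
    gap = loo_median_abs_error(comps, None) - loo_median_abs_error(comps, best)
    return best, gap
```

A gap near zero is the "essentially tied" case; a clearly positive gap is where a flat-mode fallback costs real precision.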

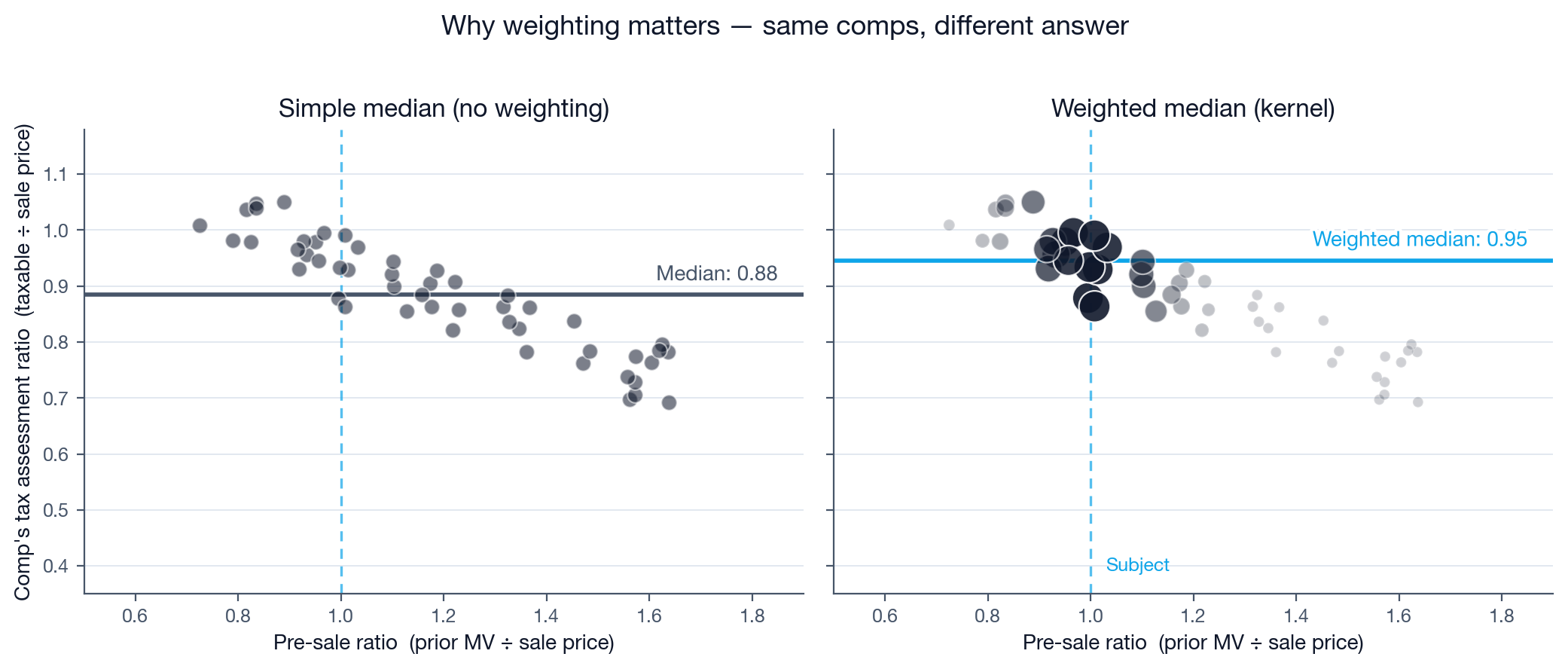

06 Why weighting changes the answer

To turn weighted comps into a single predicted ratio we use the weighted median, not the mean. The median resists one or two unusual sales pulling the prediction off-course; the weights tilt that median toward the comps that actually look like your subject.
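A weighted median sorts the comps by ratio and walks up the cumulative weight until it reaches half the total. The sketch below returns the lower weighted median; this is one common convention, since the source does not say how ties at exactly half are broken. The function name is hypothetical.

```python
from typing import Sequence


def weighted_median(values: Sequence[float], weights: Sequence[float]) -> float:
    """Lower weighted median: the smallest value whose cumulative weight reaches half the total."""
    pairs = sorted((v, w) for v, w in zip(values, weights) if w > 0)
    if not pairs:
        raise ValueError("need at least one comp with positive weight")
    half = sum(w for _, w in pairs) / 2
    cumulative = 0.0
    for value, weight in pairs:
        cumulative += weight
        if cumulative >= half:
            return value
    return pairs[-1][0]  # only reached through floating-point round-off


ratios = [0.80, 0.85, 0.90, 0.95, 1.40]  # one outlier sale at 1.40
print(weighted_median(ratios, [1, 1, 1, 1, 1]))        # equal weights -> 0.9
print(weighted_median(ratios, [1, 1, 0.2, 0.1, 0.1]))  # subject resembles the first two comps -> 0.85
```

The unweighted mean of those five ratios is 0.98, pulled up by the single 1.40 sale, while the median stays at 0.90. Shifting weight toward the comps that resemble the subject moves the answer to 0.85.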

How much the kernel improves on a simple median depends on the county. Counties whose post-sale reassessments are uneven — where a stale prior assessment is a strong cue that the parcel will jump after a sale — reward the kernel's locality bias most. In Palm Beach County the kernel cuts median absolute error by 2–4 percentage points across the workhorse asset classes. In Broward County, where post-sale reassessments land much more uniformly close to sale price across the comp pool, the kernel and the simple median produce nearly identical predictions on most classes. The engine's per-county tuning measures this gap from each county's own historical residuals, and the warnings you see on the estimate page reflect it.

07 When the kernel falls back to a simple median

The kernel method requires two things to work: the subject must have a prior-year market value on file, and the comp pool must contain enough sales with the same. When either condition fails, the engine falls back to an unweighted median of comp ratios — what we call flat mode. Whether this represents a meaningful loss of precision depends on the county and asset class.

When the fallback happens in a (county, class) where historical data shows the kernel beats a simple median, the estimate page surfaces a FLAT_MODE_FALLBACK warning whose severity reflects how much the kernel typically wins by, and whose message quotes the actual empirical gap. Treat those results as directional rather than precise.

When the fallback happens in a (county, class) where the kernel and a simple median are essentially tied, no warning is raised — flat-mode is just as reliable as kernel-mode in that segment, and a generic warning would be misleading.
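The fallback rule can be sketched as below. The minimum comp count, the "essentially tied" threshold, the severity cut-off, and the severity labels are all hypothetical parameters standing in for the per-(county, class) values the tuning step produces. kernel_gain_pp is the historical improvement of the kernel over a simple median, in percentage points of median absolute error.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModeDecision:
    mode: str                       # "kernel" or "flat"
    warning: Optional[str] = None   # "FLAT_MODE_FALLBACK" or None
    severity: Optional[str] = None  # hypothetical labels: "high" / "medium"
    message: Optional[str] = None


def choose_mode(subject_prior_value: Optional[float], comps_with_prior: int,
                kernel_gain_pp: float, min_comps: int = 10,
                tie_pp: float = 0.5, high_pp: float = 2.0) -> ModeDecision:
    # Kernel mode needs the subject's prior-year value and enough comps that have one too.
    if subject_prior_value is not None and comps_with_prior >= min_comps:
        return ModeDecision("kernel")
    # Flat mode where kernel and simple median are essentially tied: no warning.
    if kernel_gain_pp <= tie_pp:
        return ModeDecision("flat")
    # Flat mode where the kernel historically wins: warn, scaled to the size of the gap.
    severity = "high" if kernel_gain_pp >= high_pp else "medium"
    message = (f"Flat mode: in this county and asset class the kernel historically "
               f"cuts median absolute error by {kernel_gain_pp:.1f} percentage points.")
    return ModeDecision("flat", "FLAT_MODE_FALLBACK", severity, message)
```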

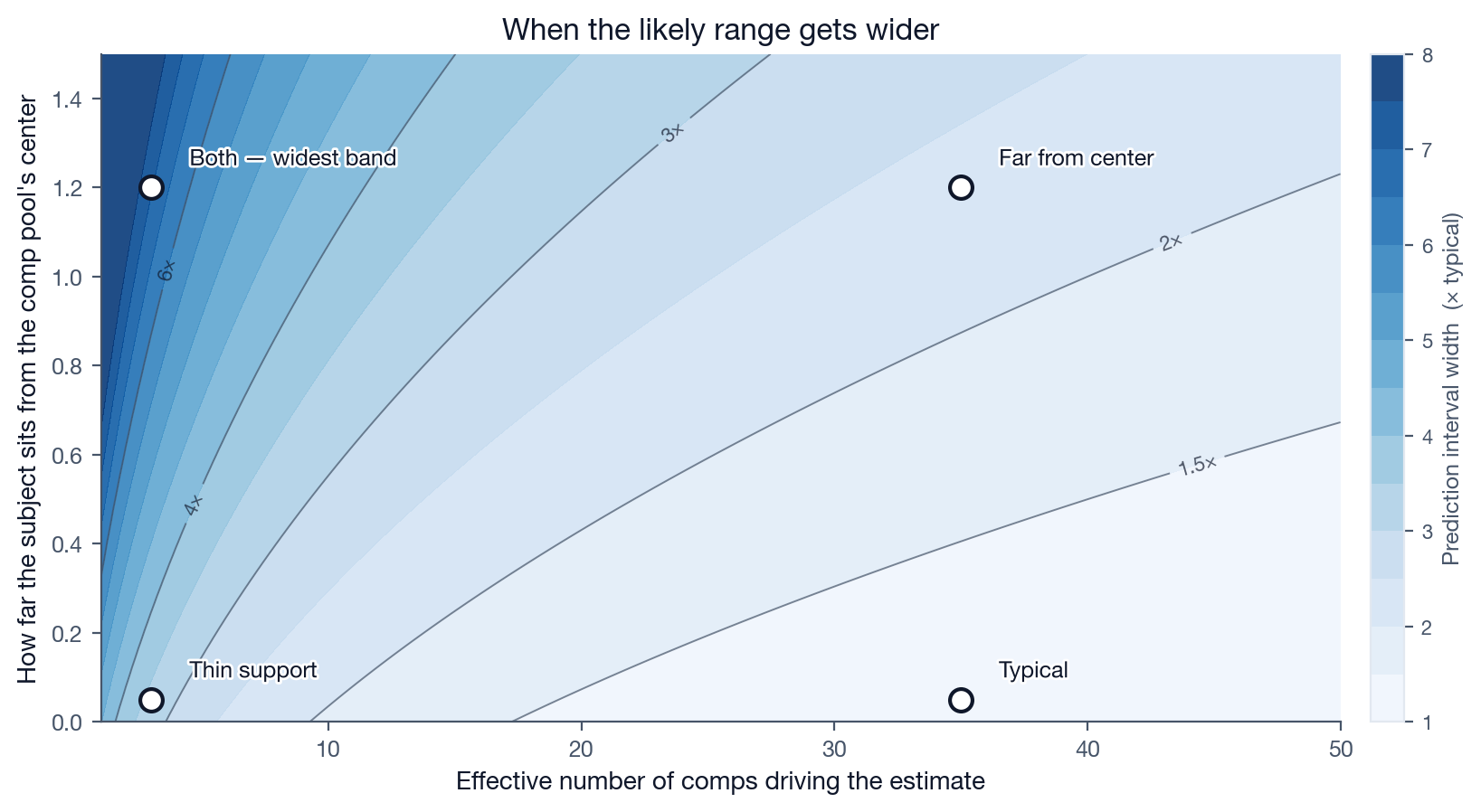

08 The likely range — and why it varies

Every estimate ships with a prediction interval — the "Likely range" shown on the estimate page. It is calibrated against historical residuals so that, on average, the actual tax bill lands inside the range about 90% of the time.

The width of the band is not constant. It scales with two factors that we know empirically predict whether the estimate will be reliable:

- Effective comp support. A subject whose prediction is driven by 30 strongly-similar comps gets a tight band. A subject whose prediction is driven by 2 weakly-similar comps gets a wide one.

- How far the subject sits from the comp pool's center. Subjects whose pre-sale ratio is near 1.0 (prior assessment ≈ sale price) sit in the densely-populated middle of the comp space. Subjects whose pre-sale ratio is far from 1.0 sit in sparse, historically less-predictable territory.

Thin support and distance from the center compound multiplicatively: a subject that has both gets a wider band than the two effects would produce if simply added together. This is the model being honest. When historical data thins out around the subject, the band has to grow to keep its 90% coverage promise.
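The source does not publish the width formula. One way to realize "multiplicative scaling plus 90% calibration" is sketched below under several assumptions. Effective comp support is measured by Kish's effective sample size, (Σw)² / Σw². Distance is measured from a center of 1.0. The two factors take the simple hypothetical forms shown, with coefficients a and b that would be tuned. The calibration step takes the 90th percentile of historical residuals after dividing each by its own width factor, in the spirit of split conformal prediction. Because the bill is an increasing linear function of the ratio for a fixed sale price and millage, a range on the ratio maps directly onto a range on the bill.

```python
import math
from typing import Sequence, Tuple


def effective_comp_count(weights: Sequence[float]) -> float:
    """Kish effective sample size: (sum of w)^2 / (sum of w^2)."""
    s = sum(weights)
    s2 = sum(w * w for w in weights)
    return (s * s) / s2 if s2 > 0 else 0.0


def width_scale(n_eff: float, pre_sale_ratio: float,
                center: float = 1.0, a: float = 1.0, b: float = 2.0) -> float:
    """Hypothetical width factor: thin support and distance from center multiply."""
    support_term = 1.0 + a / math.sqrt(max(n_eff, 1e-9))
    distance_term = 1.0 + b * abs(pre_sale_ratio - center)
    return support_term * distance_term


def calibrate_multiplier(residuals: Sequence[float], scales: Sequence[float],
                         coverage: float = 0.90) -> float:
    """Smallest multiplier that covers `coverage` of historical scaled residuals."""
    normalized = sorted(abs(r) / s for r, s in zip(residuals, scales))
    k = math.ceil(coverage * len(normalized)) - 1
    return normalized[max(k, 0)]


def likely_range(predicted_ratio: float, n_eff: float, pre_sale_ratio: float,
                 multiplier: float) -> Tuple[float, float]:
    half_width = multiplier * width_scale(n_eff, pre_sale_ratio)
    return predicted_ratio - half_width, predicted_ratio + half_width
```

With a = 1 and b = 2, a subject with one effective comp and a pre-sale ratio of 0.40 gets a width factor of 2 × 2.2 = 4.4, while one with 100 effective comps at a pre-sale ratio of 1.0 gets 1.1 × 1.0 = 1.1.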

09 Reading the warnings panel

Most estimates surface zero warnings. When the engine flags something, the warning panel sits directly under the input form and uses a three-tier severity scheme:

- Highest severity: treat the estimate as directional. The model is operating outside its strong-support regime.

- Middle severity: the estimate is usable, but precision is reduced. Examples: thin comp pool, missing NAV.

- Lowest severity: informational. Examples: asset class override applied, relaxed comp window.

The two highest-impact warnings to watch for are EXTREME_PRE_JV_RATIO (the subject sits far from the comp-space center) and THIN_COMP_SUPPORT (the prediction is driven by very few effective comps). Either should prompt you to double-check the comp pool yourself before relying on the headline number.

The cut-offs that decide when these warnings fire are not fixed numbers baked into the engine; they are computed from each (county, class)'s historical distributions during the offline tuning step. "Far from the comp-space center" and "thin support" mean different things in Palm Beach than they do in Broward, and the warnings reflect each county's own empirical regime. The same holds for FLAT_MODE_FALLBACK and HETEROGENEOUS_CLASS: whether they fire, and at what severity, is decided by the county's own data, not by hard-coded class lists.
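Per-segment cut-offs can be derived from historical percentiles, as sketched below. The specific choices are all hypothetical: measuring "far" as distance from the segment's median pre-sale ratio, firing EXTREME_PRE_JV_RATIO beyond the 95th percentile of that distance, and firing THIN_COMP_SUPPORT below the 5th percentile of historical effective comp counts. The source only says the cut-offs come from each (county, class)'s own distributions.

```python
import statistics
from typing import Dict, List, Sequence


def segment_thresholds(pre_sale_ratios: Sequence[float], n_effs: Sequence[float],
                       far_pct: int = 95, thin_pct: int = 5) -> Dict[str, float]:
    """Compute one (county, class)'s warning cut-offs from its own history."""
    center = statistics.median(pre_sale_ratios)
    distances = [abs(r - center) for r in pre_sale_ratios]
    # quantiles(n=100) returns the 1st..99th percentiles; "inclusive" stays inside the data range.
    far_cut = statistics.quantiles(distances, n=100, method="inclusive")[far_pct - 1]
    thin_cut = statistics.quantiles(n_effs, n=100, method="inclusive")[thin_pct - 1]
    return {"center": center, "far_cut": far_cut, "thin_cut": thin_cut}


def subject_warnings(pre_sale_ratio: float, n_eff: float,
                     thresholds: Dict[str, float]) -> List[str]:
    warnings = []
    if abs(pre_sale_ratio - thresholds["center"]) > thresholds["far_cut"]:
        warnings.append("EXTREME_PRE_JV_RATIO")
    if n_eff < thresholds["thin_cut"]:
        warnings.append("THIN_COMP_SUPPORT")
    return warnings
```

Running segment_thresholds separately for Palm Beach and Broward data yields different cut-offs, which is how the same warning name can mean different things in different counties.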

10 What this estimator does not do

- It does not apply personal exemptions. Homestead, senior, veteran, and similar exemptions apply to specific owners, not properties. The estimate reflects pre-exemption taxable value.

- It does not predict mid-year reassessments driven by improvements, demolitions, or land-use changes. The pool only sees the previous-year assessment and the post-sale assessment.

- It does not cover residential or vacant land. Eligible asset classes are commercial: office, retail, industrial, multifamily, hospitality, and special-purpose.

- It is not a county valuation model. It learns from observed county behavior, not from the formal valuation formulas the assessor's office uses internally.

Book a 20-minute walkthrough on a property of your choosing and inspect the comp table, warnings, and step-by-step breakdown.
